While the data center boom has yet to have a major impact on the New England grid, increased interest from data center developers is fueling concern about potential effects on energy affordability and long-term resource adequacy.

The region already faces potential supply challenges in the 2030s due to electrification-driven load growth, potential resource retirements and the struggles of building offshore wind. ISO-NE forecasts its reserve margin will decline from about 17% in 2026 to 8% in 2034.

Accurately forecasting the scale of data center development in New England is a daunting task due to the speculative nature of many interconnection inquiries and the speed-to-market sought by most developers. But multiple major utilities have reported they have gigawatts of new load under study because of a sharp uptick in interconnection requests starting in early 2024.

If the high-end outcomes for electrification and data center development materialize, New England could face a rapidly tightening balance of supply and demand. This could exacerbate energy affordability issues and threaten decarbonization efforts.

In a power system with about a 26-GW peak, the possibility of gigawatt-scale data centers means these issues could materialize quickly and with limited warning.
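As a rough illustration of that sensitivity, the sketch below applies the standard reserve margin definition, (capacity minus peak) divided by peak, to the figures cited above. It rests on loud assumptions that are not ISO-NE's: the 8% margin forecast for 2034 is applied to today's roughly 26-GW peak, no new supply is added, and each new data center is treated as drawing its full load at the time of system peak. The function names are hypothetical.

```python
def reserve_margin(capacity_gw: float, peak_gw: float) -> float:
    """Standard definition: surplus capacity as a fraction of peak load."""
    return (capacity_gw - peak_gw) / peak_gw


def margin_after_new_load(margin: float, peak_gw: float, new_load_gw: float) -> float:
    """Reserve margin after adding load, holding supply fixed.

    ASSUMPTION: the new load is fully coincident with the system peak
    and no new generation is built to serve it.
    """
    capacity_gw = peak_gw * (1 + margin)  # capacity implied by the starting margin
    return reserve_margin(capacity_gw, peak_gw + new_load_gw)


if __name__ == "__main__":
    PEAK_GW = 26.0         # approximate regional peak cited in the article
    STARTING_MARGIN = 0.08  # ISO-NE's forecast margin for 2034 (applied here as an assumption)
    for new_load_gw in (0.0, 1.0, 2.0):
        m = margin_after_new_load(STARTING_MARGIN, PEAK_GW, new_load_gw)
        print(f"+{new_load_gw:.0f} GW of new load -> reserve margin {m:.1%}")
```

Under these simplified assumptions, a single 1-GW facility would cut an 8% margin roughly in half, which is why a handful of projects could change the regional outlook.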

Forecasting Uncertainty

Prior to 2024, developers of large data centers showed very little interest in New England, in part because of the region’s high energy costs and siting limitations.

“The number literally was zero in terms of large, hyperscale data centers anywhere on Eversource’s service territory,” said Jacob Lucas, vice president of transmission planning at Eversource Energy, which owns the largest transmission footprint in New England.

Since 2024, “we’ve had anywhere between about a gigawatt-and-a-half to 7 GW simultaneously under study,” he said, noting that the total amount of demand under study can fluctuate significantly based on projects entering or dropping out of the process.

He added that “every single request we’ve ever gotten has essentially been for a 24/7/365 max load.”

The cost and duration of initial large load interconnection studies vary depending on the level of granularity sought by developers. The studies tend to last between six and 12 months and cost from $100,000 to $500,000, Lucas said. To interconnect, large loads must undergo an additional, more extensive study process, coordinated with ISO-NE, to evaluate system impacts and determine the need for interconnection upgrades.

So far, no large load projects in Eversource’s queue have reached a final interconnection agreement since interest spiked in 2024, though one recent project reached the point of negotiating a construction agreement before the developers walked away, Lucas noted.

National Grid, the third-largest transmission owner in the region, has seen a relatively steady queue of around 2.5 GW since the start of 2024. Avangrid, the region’s second-largest transmission company, declined to comment.

While New England is just starting to grapple with the potential effects of hyperscale data centers, policymakers and officials have prepared for years for load growth associated with electrification and decarbonization.

In ISO-NE’s landmark 2050 Transmission Study, published in 2023, the RTO forecast New England’s peak load to roughly double over the next 25 years and estimated that the transmission upgrades needed to serve it could cost up to $26 billion. (See ISO-NE Prices Transmission Upgrades Needed by 2050: up to $26B.)

Although electrification has proceeded at a slower pace than ISO-NE projected in 2023, the RTO still forecasts substantial electrification over the long term and expects heating electrification to shift the region from a summer- to winter-peaking system in the mid-2030s.

ISO-NE is working to incorporate large loads into its 10-year demand forecast for the first time in 2026. The RTO plans to incorporate prospective large loads that are greater than 20 MW and under formal study. It proposes to derate the proposed nameplate capacity of these projects based on their stage of development and expected utilization rate.
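The derating approach described above can be sketched roughly as follows. This is not ISO-NE's methodology: the stage labels, stage weights, utilization figures and project list are all hypothetical placeholders chosen for illustration. Only the 20-MW threshold and the formal-study requirement come from the RTO's stated plan.

```python
from dataclasses import dataclass

MIN_SIZE_MW = 20.0  # threshold cited in the article

# HYPOTHETICAL weights: the likelihood-style discount applied at each
# stage of development. Stages not listed here (e.g. an informal
# inquiry) are treated as not yet under formal study and are excluded.
STAGE_WEIGHT = {
    "formal_study": 0.25,
    "agreement_signed": 0.60,
    "under_construction": 0.90,
}


@dataclass
class LargeLoad:
    name: str
    nameplate_mw: float
    stage: str
    utilization: float  # expected share of nameplate actually drawn, 0 to 1


def derated_mw(project: LargeLoad) -> float:
    """Return the forecast contribution of one project, in MW."""
    if project.nameplate_mw <= MIN_SIZE_MW:
        return 0.0  # below the large-load threshold
    if project.stage not in STAGE_WEIGHT:
        return 0.0  # not under formal study
    return project.nameplate_mw * STAGE_WEIGHT[project.stage] * project.utilization


def forecast_large_load_mw(projects: list[LargeLoad]) -> float:
    """Sum the derated contributions of all qualifying projects."""
    return sum(derated_mw(p) for p in projects)


if __name__ == "__main__":
    # HYPOTHETICAL queue, not real project data.
    queue = [
        LargeLoad("Project A", 100.0, "formal_study", 0.8),
        LargeLoad("Project B", 1000.0, "inquiry", 0.8),
        LargeLoad("Project C", 15.0, "under_construction", 0.9),
    ]
    print(f"Derated large-load forecast: {forecast_large_load_mw(queue):.1f} MW")
```

In this toy queue, only Project A counts toward the forecast; the 1,000-MW inquiry contributes nothing until it enters formal study, which illustrates how a forecast built this way can trail the headline totals utilities report.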

The RTO’s 2026 draft forecast includes only about 110 MW of additional large load demand by 2035.

“Prospective large loads in New England remain limited in number and scale,” Victoria Rojo, supervisor of load forecasting for ISO-NE, noted at a February NEPOOL meeting. “Recent data collected from TOs suggests that there are a couple hundred megawatts of large loads in the formal study phase.”

But accurately predicting data center development 10 years out is extremely challenging. Just one large-scale project could dramatically change the outlook for the region, while the fate of individual projects may depend on the ebb and flow of global economics.

According to Lucas, large load projects studied by Eversource have ranged in size from about 100 to 1,000 MW. Uncertainty around which projects actually will materialize is “one of the big issues” in planning for data center development, he said.

Significantly overestimating demand could distort the market by creating a false appearance of resource adequacy issues, he said. But if a project on the higher end of the range is among the first to commit to development, ISO-NE’s estimate could be too low by a factor of five, he added.

Ultimately, ISO-NE’s introduction of large load demand into its forecast is a step in the right direction, he said, adding that the demand is “not going to be zero.”

ISO-NE has noted it has “limited visibility into detailed project information,” and data sharing “can be sensitive and is not ubiquitous across entities.”

To help address these issues, it has developed a quarterly survey for utilities to submit information on large loads, which should help the RTO “proactively characterize and incorporate large loads into the long-term load forecast.”

ISO-NE’s ongoing capacity market overhaul also may reduce some of the risk associated with forecasting uncertainty. FERC recently approved ISO-NE’s proposal to transition to a prompt capacity auction, cutting the time between auctions and commitment periods by about three years (ER26-925). (See FERC Approves ISO-NE Prompt Capacity Market.)

Prompt auctions will be held about a month before each yearlong commitment period, enabling the RTO to rely on more up-to-date information on supply and demand.

Increased demand forecasting accuracy “will be especially important when accounting for data centers and other large load proposals, which are often highly uncertain in terms of proposal attrition rates, relative construction time and electric demand characteristics,” the RTO told FERC.

But these changes would not address the fundamental issues that could occur if new demand significantly outpaces new supply in the region.

Energy Affordability

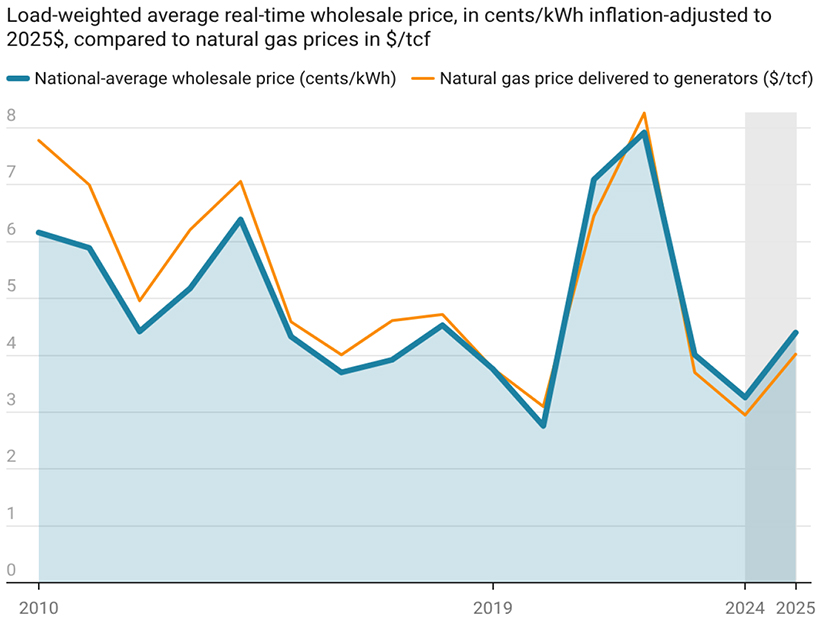

Affordability concerns have dominated energy policy discussions in New England since consumers were hit with price spikes in the winter of 2024/25. Costs remained high over the past winter, which was the most expensive winter in the history of ISO-NE’s wholesale markets. (See 2025/26 Most Expensive Winter in History of ISO-NE Markets.)

“Everybody is acutely aware that we are already in this affordability crunch,” said Noah Berman, senior policy advocate at the Acadia Center. With the potential for data center demand on the horizon, “legislators are thinking about this … and are trying to get out ahead of it.”

In PJM, the data center boom has contributed to a rapid increase in forecast demand and skyrocketing capacity prices in recent capacity auctions. (See PJM Capacity Prices Hit $329/MW-day Price Cap.) Nationally, data center demand also drove increased coal-fired generation and overall power sector emissions in 2025.

“People are seeing what’s happening in PJM … in PJM [data centers] are absolutely causing price spikes,” Berman said.

Even without new data centers, New England could face power supply issues starting in the mid-2030s.

“We are, as a region, really struggling to build new generation,” said Lucas of Eversource. “The looming issue New England has … is capacity shortage.”

Adding a few large data centers to this equation is “just going to make that worse,” he said.

To prevent negative impacts on consumers and the climate, some advocates argue regulators must require data center developers to procure enough new carbon-free generation to meet their demand.

But the data center industry has opposed these mandates. Lucas Fykes, director of energy policy at the Data Center Coalition, said data centers should not be required to bring their own supply. He stressed the importance of developing accurate demand forecasts and said it should be up to states and utilities to figure out how best to ensure resource adequacy.

Interconnecting large data centers also could require significant upgrades to the region’s transmission system. Drew Landry, Maine deputy public advocate, said the region must work to ensure data center developers are accountable for all costs of the system upgrades required to interconnect their facilities.

He said the data center boom appears to be “a bit of a gold rush situation,” with developers scrambling to advance projects that ultimately may fail. The potential for stranded projects, he said, “raises the potential for stranded costs.”

To protect against this risk, ISO-NE and utilities should collect as much money upfront as possible to fund the upgrades, he said.

Across New England, the transmission and energy supply concerns are starting to translate into legislation.

A bill (H.5175) passed by the Massachusetts House of Representatives in late February would require data centers with load larger than 20 MW to procure at least 80% renewable energy. The bill also would direct electric utilities to establish specific data center tariffs designed to “ensure that non-data center ratepayers are protected from any increased costs that result from increased electricity demand.”

In late March, the Vermont House of Representatives passed a bill (H.727) that similarly would create a separate ratepayer class for data centers over 20 MW. In the siting process, data centers would have to prove to the Vermont Public Utility Commission that development “will promote the general good of the state” and will not adversely affect other ratepayers.

Democrats in Rhode Island also have introduced legislation (S.2427) to create a new retail customer class for data centers intended to protect against cost shifts.

And in Maine, a temporary data center moratorium recently passed by the Maine House would pause development in the state until November 2027. The bill appears likely to receive support from the Senate and Gov. Janet Mills (D).

Blowback

The data center boom also has been met with growing grassroots backlash, with opponents successfully blocking or delaying projects throughout the country. New England is no exception.

For opponents, data centers can represent a physical embodiment of unconstrained capitalism, Big Tech, inequality, environmental degradation and AI slop.

“There is a real extractive relationship between data centers and local communities,” said Dana Colihan, co-executive director of Slingshot, an environmental justice nonprofit. “These facilities are primarily benefiting wealthy corporations, not everyday folks.”

At a March meeting of the ISO-NE Consumer Liaison Group — one of the region’s few forums that convenes grassroots activists, ISO-NE officials and state and industry representatives — Vermont-based activists sent a blunt message to any data center developers eyeing the state.

“If anyone tries to build data centers here, we will drive them out,” one speaker said.

Data center opponents have scored several wins in local battles in recent months.

In Lewiston, Maine, city councilors voted to kill a data center development plan after intense local opposition.

In Wiscasset, Maine, the selectboard voted to pause early-stage discussions about the development of a data center on town-owned land amid backlash from the community.

And in Lowell, Mass., the city council passed a one-year moratorium on data center development or expansion amid the Markley Group’s efforts to expand an existing data center in a residential neighborhood. Local residents have complained about noise and air pollution from the facility and have legally challenged an air permit approval allowing Markley to add eight backup diesel generators to the facility.

Public debates over the moratorium pitted union electrical workers against environmentalists and neighbors. One city councilor compared her decision on the moratorium vote to choosing a favorite child.

“I think community engagement is often key to determining if projects move forward and move forward well, and ensuring mitigation measures are put in place,” said Anxhela Mile, staff attorney for the Conservation Law Foundation.

Data center proponents argue development is essential to maintaining U.S. economic competitiveness. They point to increased tax revenue and job creation benefiting local communities.

Data centers can bring millions of dollars in tax benefits while maintaining the “small-town feel” of rural areas, Fykes said. “Many of our members are focused on being good stewards of the community.”

A 2024 economic development law passed in Massachusetts included significant sales tax exemptions for data centers. The state finalized the tax breaks on March 27, authorizing a sales tax exemption for equipment, software, electricity use and construction costs for data center facilities.

To qualify under the law, data centers must employ at least 100 full-time workers in the state, but the regulations do not include substantial ratepayer or environmental protections.

“If you’re going to incentivize these companies to come here, make sure you’re doing it correctly,” Mile said, noting that CLF was one of a handful of groups to voice concern about the lack of consumer and environmental protections during the legislative process.

“I think a lot of groups were caught off guard with it,” she said. “It just seemed like it just kind of slipped through.”

But the political climate has shifted since the passage of the law, with energy affordability taking precedence and local groups mobilizing against data center development. Future legislation may prove to be far more controversial.