When it comes to the grid, can artificial intelligence be two things at once? Demand planners and climate realists see AI as the bad guy, driving up demand and grinding decarbonization goals into the ground. Yet industry leaders and techno-optimists believe it can be the good guy.

I met with some of Silicon Valley’s biggest brains recently to unpack this complex question — and, critically, whether AI can be the good guy while meeting FERC-approved NERC Critical Infrastructure Protection (CIP) standards.

There’s no doubt AI could be exponentially faster, smarter, more innovative and more efficient than the current workforce, but can it be reliable? We’ve all heard of AI hallucination in everything from government reports to research papers, but when you are dealing with HVDC, errors can have life-and-death consequences.

If the industry does not quickly define “reliable AI” and build guardrails around its deployment, we risk importing stochastic behavior into systems designed for determinism.

That is not a technology debate. It is a reliability and safety debate. And it is one RTOs, regulators, grid and power plant operators, and utilities cannot afford to postpone.

The AI-Enabled Vision for the Future Grid

AI can optimize transmission and distribution, move more electrons through the same wires, free workers from mundane tasks, and manage preemptive maintenance. And there are ways it can help the grid that we are only beginning to imagine.

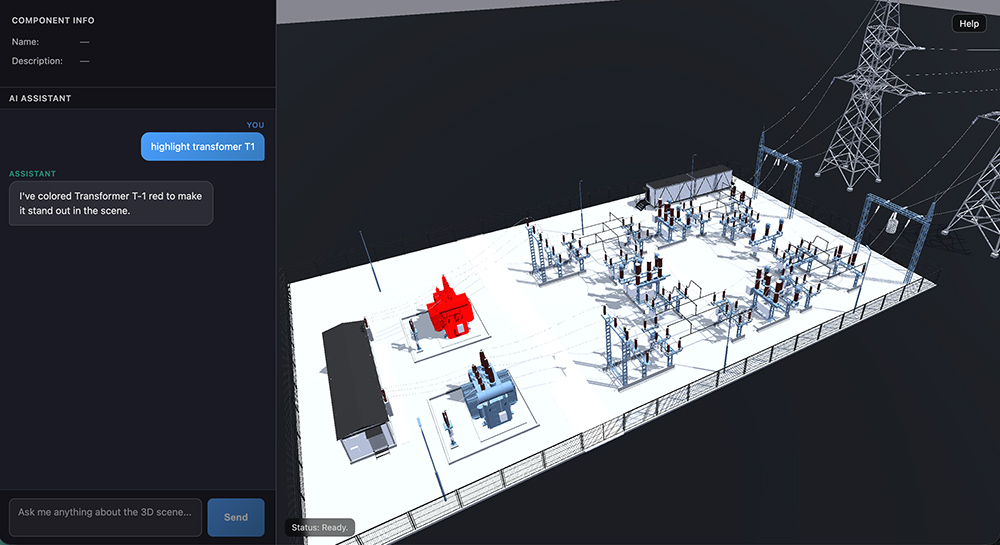

One of the most interesting use cases is building photorealistic digital twins of nuclear power plants and substations to enable remote operations and maintenance, which I’ll dig into more later.

Another application involves drones analyzing snow-cloaked power lines without waiting for a winter storm to provide the training environment. AI not only creates images of the storm-that-doesn’t-exist but also teaches the system what to look for in the white-on-white, post-storm landscape from all the angles an experienced drone pilot would use. The goal: eliminate the need to wait until roads are accessible before lines can be inspected. Grid operators will be able to keep drones in habs (the boxes drones call home) near HVDC towers so they can deploy after storms and for regular maintenance.

There also are ways to make AI less power-hungry, at least in an electron sense. AI may still want to rule over its human underlings but can do so more efficiently with better data compression.

Why AI Sometimes Gets it Wrong

AI is ubiquitous: You can’t do a simple web search without an AI-generated summary popping up. Sometimes it’s great. Other times it’s amusingly wrong.

“When I ask it to search the web, nine out of 10 times it gets the right source with the right quote. But 10%, I go to the link that it showed, and it doesn’t exist,” said Yuriy Yuzifovich, chief technology officer of enterprise AI at GlobalLogic, while referring to a popular AI assistant.

Users refer to AI errors as hallucinations. When I was using early versions of ChatGPT to research technical topics, rather than admit “I can’t find any sources for that,” the eager-to-please AI would sometimes invent research papers. If I’m searching for sources for an RTO Insider article, that hallucination is an annoyance. I correct it and move on.

And here’s the part that’s poorly understood in the public conversation: The large language models (LLMs) that power consumer AI tools are built to behave this way. They are intentionally probabilistic.

Hallucinations and HVDC Don’t Mix

You have to go inside LLMs to understand how and why they can deliver errors with such confidence.

LLMs “understand” the world with tokens and vectors. Tokens break language down into small chunks that AI models read, while vectors represent those tokens in a numerical, high-dimensional way that gives each token context and relationships with similar tokens. If “All-Bran” were a token, its vector would tell you it’s in aisle 5 on the third shelf with all the other cereals. AI constructs answers to questions by stringing together a series of tokens it has found using vectors.

Continuing to use a three-dimensional analogy to simplify the multidimensional space that AI operates in, imagine AI is doing your shopping. It enters the supermarket, looking where the vector has directed it. At the third shelf of aisle 5, it grabs the closest box of cereal with bran in its name. Because it is closer than the proper target and similar enough, the model assumes it’s right and stops looking.

As it gives you bran flakes, it hasn’t made a wild guess, but it has delivered something any fan of All-Bran (yes, they exist) would consider wildly, soggily wrong.
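For readers who think in code, the supermarket analogy can be sketched in a few lines of Python. This is a toy illustration of "closest vector wins," not a description of how any particular LLM is built. The three-number coordinates, the `SHELF` table and the `nearest` function are all invented for the example.

```python
import math

# Toy 3-D "store coordinates" (aisle, shelf, position along the shelf).
# These numbers are invented for illustration only.
SHELF = {
    "All-Bran": (5.0, 3.0, 2.0),
    "Bran Flakes": (5.0, 3.0, 1.0),
    "Corn Flakes": (5.0, 2.0, 4.0),
}


def nearest(query, items):
    """Return the item whose vector is closest to the query (Euclidean distance)."""
    return min(items, key=lambda name: math.dist(query, items[name]))


# The shopper arrives slightly off target: right aisle, right shelf,
# but a little nearer the wrong box.
query = (5.0, 3.0, 1.4)
pick = nearest(query, SHELF)
print(pick)
```

The shopper never leaves the cereal shelf, so the pick is plausible. It is simply not the box that was asked for, and nothing in the result flags the difference.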

“The randomness of the answer is built in. It’s a feature. It’s not a bug,” Yuzifovich said.
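A minimal sketch of what "built in" can look like, assuming the common approach of sampling the next token from a probability distribution rather than always taking the top-scoring one. The token names and scores below are hypothetical, and `greedy` and `sample` are illustrative helpers, not any vendor's API.

```python
import math
import random

TOKENS = ["All-Bran", "Bran Flakes", "Corn Flakes"]
SCORES = [2.0, 1.5, 0.1]  # hypothetical model scores, higher means more likely


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    exps = [math.exp(s / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(tokens, scores):
    """Always return the top-scoring token: same input, same output."""
    return tokens[scores.index(max(scores))]


def sample(tokens, scores, rng, temperature=1.0):
    """Draw one token at random, weighted by its probability."""
    return rng.choices(tokens, weights=softmax(scores, temperature), k=1)[0]


rng = random.Random(0)  # fixed seed so this run can be repeated
draws = [sample(TOKENS, SCORES, rng) for _ in range(1000)]
counts = {token: draws.count(token) for token in TOKENS}
print(greedy(TOKENS, SCORES), counts)
```

The greedy pick never changes. The sampled picks are spread across all three boxes, which is the behavior Yuzifovich is describing.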

In some cases, such as writing a conference presentation, that randomness can look like creativity. But the stakes are higher in the electricity sector. The grid, especially the HVDC side, operates with life-and-death stakes. If AI were advising one of your control room operators during a switching event, or guiding a field crew during storm restoration, that “nine out of 10 times” isn’t innovation. It’s an unacceptable risk.

Does this mean there’s no space for AI in managing critical infrastructure? The problem is most public discourse treats “AI” as a monolithic technology. It isn’t. And if regulators and operators fail to draw sharper distinctions, we risk regulating the wrong thing while deploying tools that introduce the instability we work hard to eliminate.

When ‘Good Enough’ AI Isn’t Close to Good Enough

Utilities have been pitched an avalanche of AI solutions over the past few years: copilots for engineers, chatbot overlays for procedures, systems that promise to answer questions from internal manuals.

On slides, it looks compelling, but in practice, the results have been uneven.

“Most of these organizations love the idea that you’re going to do something with their data and get some magic beans of intelligence back,” Malcolm Hay, GlobalLogic’s vice president of energy, said. “There have been a lot of proof of concepts done that are kind of ‘meh.’ They deliver a bit,” he said, but are not accurate enough to be relied on in operational settings that can’t tolerate any kind of ambiguity. “Any hallucinations … would destroy confidence and obviously [pose] security and safety risks.”

Retrieval-augmented generation (RAG) is a perfect example of this gap between promise and performance. Instead of letting a model roam the internet, you point it at curated internal documents. The failure mode, however, is often indistinguishable from a consumer chatbot’s.

“RAG is similar to ChatGPT, but instead of the internet, you give your documents,” Yuzifovich said. “The results are very similar: most of the time it gets it right, but sometimes it doesn’t — and when it doesn’t, you don’t know when.”

That last clause is the operational killer. If a human has to verify every output, the efficiency case collapses.

Meanwhile, workforce pressures are intensifying. During one storm restoration workshop, a utility described line workers running 16-hour shifts after a major event. “We need to really help them and give them the tools they need to support us,” said Carlos Elena-Lenz, vice president for digital enablement and transformation at Hitachi Energy.

Those tools cannot be probabilistic guessers.

What ‘Reliable AI’ Actually Means

In the aerospace world, craft or launch vehicles that carry people are called “human-rated” in contrast to vehicles that carry a non-human payload. They are designed to a higher standard because their failure has more significant consequences. It’s a concept that easily translates to the energy world. Every day, crews work with a complex system that, if mishandled, could have fatal consequences.

In safety-critical environments, the definition of AI success is radically different from Silicon Valley’s definition.

Four requirements for “Reliable AI” surfaced repeatedly in my conversations.

1. Deterministic, not Stochastic

If you ask the same question under the same conditions, you have to get the same answer every time. “It’s very important to show … on a small subset … that no matter how many times you’re asking the same question, it’s the same answer,” Yuzifovich said. Variability is intolerable in a control room.

2. Grounded in Structured Knowledge

The foundation of reliable AI is not linguistic fluency; it is structured domain knowledge. “Knowledge is just a collection of interconnected knowledge: This fact is related to this fact,” Yuzifovich said. “With LLMs, we can finally produce enormous amounts of this knowledge as code.”

This is not “upload your PDFs and hope.” It requires iterative extraction and validation, with subject matter experts (SMEs) in the loop across multiple passes to codify critical rules.

3. Able to Say ‘I Don’t Know’

Safety-critical systems must value accuracy over giving an answer. They need to know when they don’t know and never guess. In other words, we want a HAL that will say, “I’m sorry, Dave. I’m afraid I can’t do that.” An “I don’t know” state is not weakness. It is governance.

4. Traceable and Auditable

When an AI suggests an action, operators must see the chain of reasoning and trace back as far as needed, even if it means going back to the source documents that contain the standard operating procedures. Mainstream AI models often are opaque by design, but grid-grade AI must be engineered for auditability. The system must behave like a senior engineer, not just a librarian. It must be grounded in rules, not merely fluent in text.
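Three of the four requirements above (deterministic, able to say "I don't know," traceable) can be illustrated with a deliberately simple sketch. It assumes the structured knowledge has already been extracted and validated. The `RULES` table, the `Answer` type, the `ask` function and the document name "SOP-EXAMPLE" are all hypothetical and are not taken from any vendor's product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Answer:
    text: Optional[str]    # None means "I don't know"
    source: Optional[str]  # where the answer came from, for audit


# Hypothetical, human-validated rules. Content invented for illustration.
RULES = {
    ("disconnector", "open"): Answer(
        text="Open the associated circuit breaker first.",
        source="SOP-EXAMPLE, section 4.2 (hypothetical)",
    ),
}


def ask(asset_type: str, action: str) -> Answer:
    """Plain lookup: no sampling, so the same question gets the same answer."""
    key = (asset_type.strip().lower(), action.strip().lower())
    # No matching rule means an explicit "don't know", never a guess.
    return RULES.get(key, Answer(text=None, source=None))


known = ask("Disconnector", "open")
unknown = ask("transformer", "energize")
print(known)
print(unknown)
```

A real system would be far richer than a lookup table, but the contract is the point: every answer carries a source, and a question outside the knowledge base returns nothing rather than something plausible.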

Building the World of Truth

As part of the nation’s critical infrastructure, utilities, power plant owners and grid operators must isolate their data systems from external sources to ensure cybersecurity. That means even if some of the data exists on the web, it’s not accessed there. For example, the installation and commissioning manual for a transformer may exist on the manufacturer’s website, but it will need to be replicated inside a secure system for internal use.

To create “knowledge as code,” everything gets uploaded, Renan Giovanini, chief technology officer of energy business at GlobalLogic, said. And by everything, he means everything: the original RFQ with specs, the operations manuals of every component, the history of faults and repairs.

For a greenfield plant, that’s probably already digitized and easily uploaded. However, for a 70-year-old substation with transformers as old as the average worker, creating the knowledge base requires sifting through warehouses of records and digitizing the handwritten notes of electricians who have serviced the plant over the years.

The sheer magnitude of the task may seem overwhelming, and industry players will need to believe there will be a real return on that investment. The challenge for technology providers will be to help customers make the case for the long-term benefits the considerable investment will deliver.

From Chatbots to Operational Systems

The public narrative around AI remains chatbot-centric. Inside utilities and OEM environments, the picture looks very different. In one nuclear maintenance platform demonstration, a GlobalLogic project that is live today, engineers built a detailed digital twin tied directly to procedures and asset data.

“The customer wanted to have a solution to better coordinate their maintenance teams, so they could remotely get together and plan for work,” Giovanini said. “They had team members across the globe, that, in the past, would go to a [Microsoft] Teams meeting.” The challenge would come as soon as people on the call needed the position of a particular piece of equipment or access to a user guide. “We worked with them to create a digital twin representation of the nuclear power plant and enrich it with many different data sets, user guides, maintenance procedures and so forth.”

The representation replicates the plant’s facilities in an immersive metaverse, enabling remote access, real-time collaboration and AI-powered operations management. The visual layer is important, but the real innovation is underneath: structured rule enforcement tied to plant documentation.

In a demonstration of a substation digital twin under development, those structured rules became concrete: An operator instructed the AI to open a disconnector. The system refused.

“I cannot open this disconnector because there is a circuit breaker that must be opened first,” it replied.

The rule was not manually coded by a software engineer.

“What we take is a standard operating procedure from the utility that gets ingested by a knowledge database. There is no need now for a software engineer to transform that into rules,” Giovanini said.

It enables procedural enforcement at machine speed.
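As a rough sketch of the enforcement step only, the refusal in the demonstration can be modeled as a precondition check against the current switching state. The ingestion of the procedure into rules, which Giovanini describes as automated, is not shown here. The rule is written by hand for illustration, and the device names, the `Interlock` type and the `check` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interlock:
    device: str         # device the command targets
    action: str         # action being requested
    requires_open: str  # device that must already be open
    source: str         # procedure the rule was derived from


def check(device, action, states, interlocks):
    """Return (allowed, reason). Unknown states are treated as not open."""
    for rule in interlocks:
        if rule.device == device and rule.action == action:
            if states.get(rule.requires_open) != "open":
                reason = (
                    f"Cannot {action} {device}: {rule.requires_open} "
                    f"must be opened first ({rule.source})."
                )
                return False, reason
    return True, "No interlock violated."


# Hypothetical rule and device names, invented for illustration.
RULES = [Interlock("DS-1", "open", "CB-1", "SOP-EXAMPLE 4.2 (hypothetical)")]

print(check("DS-1", "open", {"CB-1": "closed"}, RULES))
print(check("DS-1", "open", {"CB-1": "open"}, RULES))
```

Two design choices matter more than the code itself: the refusal names the procedure it came from, and a breaker whose state is unknown is treated as closed.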

In storm response, similar pipelines are emerging, as in the example of the inspection drones. “We started using synthetic data generation to create snowy scenes and we’re feeding them into our computer vision model,” Elena-Lenz said.

Beyond having a fleet of drones that can inspect and report on damage, the sensing-to-decision architecture should be able to collate the damage reports, prioritize them, and then feed into a workforce management platform that can assign the work based on the crew’s locations, tools and capacity, he said.
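One way to picture that last step is the simplified sketch below. It assumes damage reports arrive with a severity score and a required tool, and it uses a basic rule (worst damage first, nearest qualified crew with spare capacity) that is an illustrative assumption, not Hitachi Energy's method. All names and data are invented.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class DamageReport:
    report_id: str
    severity: int        # higher is worse
    location: tuple      # (x, y) in arbitrary map units
    required_tool: str


@dataclass(frozen=True)
class Crew:
    name: str
    location: tuple
    tools: frozenset
    capacity: int        # number of jobs the crew can still take


def assign(reports, crews):
    """Map each report ID to a crew name, or None if no crew qualifies."""
    remaining = {crew.name: crew.capacity for crew in crews}
    plan = {}
    # Worst damage first; ties are broken by report ID so the plan is repeatable.
    for report in sorted(reports, key=lambda r: (-r.severity, r.report_id)):
        candidates = [
            crew for crew in crews
            if remaining[crew.name] > 0 and report.required_tool in crew.tools
        ]
        if not candidates:
            plan[report.report_id] = None
            continue
        best = min(
            candidates,
            key=lambda c: (math.dist(c.location, report.location), c.name),
        )
        plan[report.report_id] = best.name
        remaining[best.name] -= 1
    return plan


reports = [
    DamageReport("R1", 2, (0, 0), "bucket truck"),
    DamageReport("R2", 5, (10, 0), "bucket truck"),
    DamageReport("R3", 1, (0, 1), "crane"),
]
crews = [
    Crew("A", (1, 0), frozenset({"bucket truck"}), 1),
    Crew("B", (9, 0), frozenset({"bucket truck"}), 1),
]
plan = assign(reports, crews)
print(plan)
```

In this toy run, the job that needs a crane goes unassigned rather than being handed to a crew without one, the dispatch version of "I don't know."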

The Human Knowledge Emergency

The most urgent case for reliable AI is not automation. It is retention. The industry is facing a crisis as the generation that holds deep experience retires.

“We’re losing this context for these old systems,” Giovanini said. “By capturing this knowledge into a digital format, that tribal knowledge now will be part of this utility or company knowledge space.”

Another engineer described veterans who can diagnose issues by sound alone. “They can go out to a substation and just by listening tell you if everything is working. You can’t create software that beats decades of knowledge.” Yet the industry must capture as much of that knowledge as it can before it loses that experience, and possibly train systems in ways no one planned, such as audio detection of certain fault types.

Reliable AI, in this context, becomes a continuity strategy, embedding institutional memory into auditable systems.

Planning for an AI-enabled Future

By the end of a dozen conversations and demonstrations, I’d laid my dun-colored climate realist glasses to one side and donned a techno-optimist’s hat. AI data centers’ demand may create a challenge for the grid, one that will put emissions reduction goals on the back burner in a way that I find hard to stomach, but that doesn’t mean the industry won’t also benefit from it.

The select few examples of how AI can multiply human capabilities and preserve human experience for generations to come barely scratch the surface. There are many ways AI can help the industry become faster, smarter, safer and more responsive at getting more out of existing assets.

But regulators and industry leaders need to ensure the industry maintains the highest standards in this rapidly evolving digital world.

“Reliable AI” guardrails are essential, not only to meet NERC’s CIP requirements, but also to continue protecting the grid and the people who work on it and live near it in the conservative, risk-averse way the industry holds as sacred.

Power Play Columnist Dej Knuckey is a climate and energy writer with decades of industry experience.